The Next Phase of AIGC: Focusing on Human-AI Collaboration Rate

In the current rush toward AIGC (AI-Generated Content), blindly pursuing full automation often produces errors that compound exponentially. This article proposes a standardized workflow based on HITL (Human-in-the-Loop) that addresses hallucination through three human gates, and introduces “Human-AI Collaboration Rate” as a metric to help product managers and content creators balance efficiency and quality.

With the popularity of large models, we see a dangerous trend: whether in enterprise applications or personal creations, everyone is frantically pursuing “full automation.” We attempt to construct a perfect prompt or agent, hoping that clicking “generate” will yield a flawless industry report or code project.

However, the reality is often harsh—outputs are either mediocre or completely nonsensical.

Why does full automation fail in complex tasks? As product managers, how can we design an SOP that captures AI's efficiency while ensuring output quality?

This article will introduce a HITL (Human-in-the-Loop) workflow and the core concepts of “Publishing Etiquette” and “Human-AI Collaboration Rate”, aiming to establish standards for knowledge production in the AI era.

Why HITL is Necessary: Error Amplification and “Hallucination Compound Interest”

In simple single-step tasks (like “help me write a leave request”), AI performs remarkably well. However, in long-chain complex tasks (like “writing a competitive analysis report”), fully automated processes without human intervention are impractical.

The core reason is that the context of real tasks cannot be 100% covered by prompts, and small deviations compound over multiple steps.

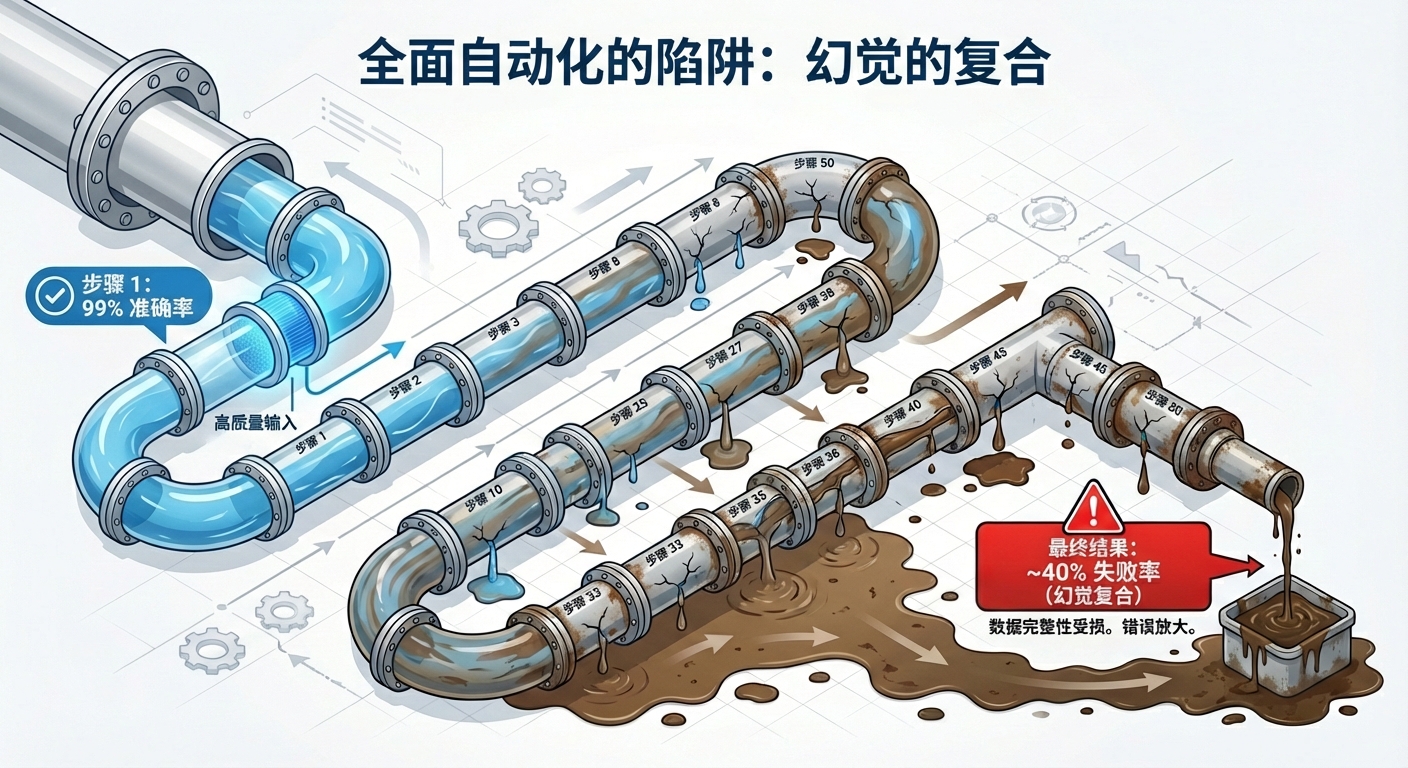

We can illustrate the terrifying nature of “hallucination compound interest” with a simple mathematical model:

Suppose we have built a fully automated agent process that requires 50 steps to complete a task. Even if the most advanced model achieves 99% accuracy per step (a hallucination rate of only 1%), the probability of a correct final result after 50 uninterrupted steps, assuming the steps fail independently, is:

$$0.99^{50} \approx 0.605$$

This means the failure rate of the final task is as high as 39.5%.

This is why we cannot blindly rely on automation. In multi-step reasoning, a small error in the previous step becomes an “erroneous premise” for the next step, ultimately leading to logical collapse.
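The 39.5% figure can be checked numerically. The following is a minimal sketch, assuming every step succeeds independently with the same accuracy (a simplification; real errors are often correlated). The function name `chain_success_probability` is illustrative only.

```python
def chain_success_probability(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step of an unattended chain is correct.

    Assumes independent steps that all share the same accuracy.
    """
    if not 0.0 <= per_step_accuracy <= 1.0:
        raise ValueError("per_step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_accuracy ** steps


if __name__ == "__main__":
    # Compare short and long chains at the same 99% per-step accuracy.
    for steps in (1, 10, 50, 100):
        p = chain_success_probability(0.99, steps)
        print(f"{steps:>3} steps: success {p:.1%}, failure {1 - p:.1%}")
```

Under these assumptions the failure rate grows quickly with chain length, which is why the length of any unattended stretch matters more than the per-step accuracy alone.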

Therefore, we need to introduce the HITL (Human-in-the-Loop) model.

- Human: Responsible for setting problems and boundaries → Identifying and correcting errors → Value/risk judgment → Final endorsement.

- AI: Responsible for data aggregation → Generating alternative solutions → Automating repetitive steps → Language and structure optimization.

AI processes information to make it “usable,” while humans ensure the output represents “my viewpoint.”

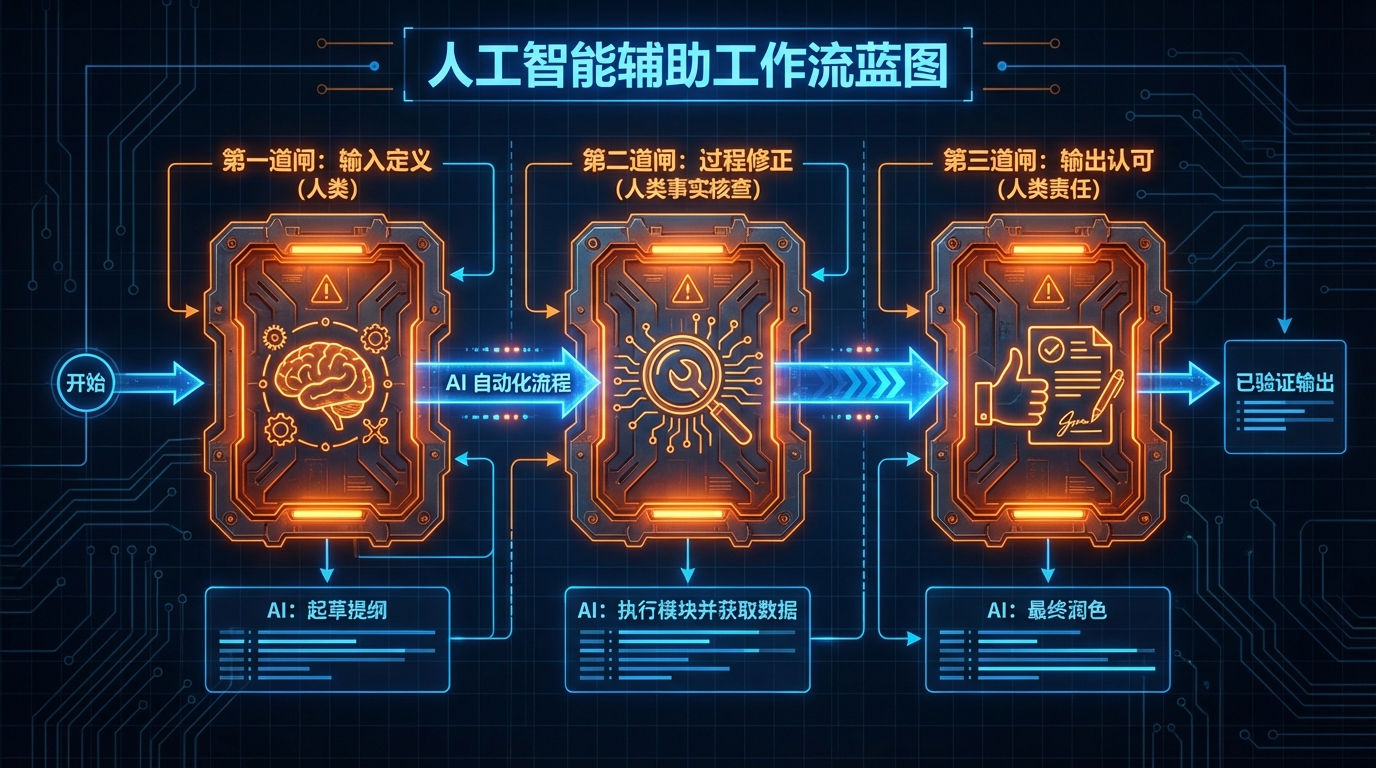

Implementing SOP: Setting Up Three Human Gates

To cut off error amplification, we need to set up three key “human gates” in the workflow:

First Gate: Input Layer’s “Problem Definition”

AI cannot guess your implicit intentions out of thin air. In the input phase, humans must complete the “definition” work.

- AI Action: Generate an outline based on vague instructions.

- Human Intervention: Confirm that the outline is on topic and that the constraints (such as word count, tone, and prohibited words) are clear.

- Value: Prevent directional errors. This is the cheapest phase in which to correct them.

Second Gate: Process Layer’s “Identification and Correction”

This is the core of HITL. Do not let AI run through all 50 steps at once; instead, break it down into several milestones.

- AI Action: Execute specific modules (like “collecting competitive data”).

- Human Intervention: Check factual accuracy (Fact Check). If AI fabricates data, humans must correct it before proceeding to the next step.

- Value: Block “hallucination compound interest” by giving the next step a verified, corrected premise at every milestone, so errors stop accumulating across milestones.

Third Gate: Output Layer’s “Value Endorsement”

- AI Action: Generate the final polished text.

- Human Intervention: Compliance review, risk control, and most importantly—confirming “this is a viewpoint I am willing to take responsibility for.”

- Value: Build trust.
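The three gates can be sketched as a small pipeline runner. This is a rough illustration, not a reference implementation: `Milestone`, `run_with_gates`, and the callback signatures are hypothetical names, and the AI and human steps are plain functions standing in for a model call and a review interface.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Milestone:
    name: str
    run_ai: Callable[[str], str]   # hypothetical: context in, AI draft out
    review: Callable[[str], str]   # hypothetical: human returns corrected text


def run_with_gates(
    confirmed_brief: str,
    milestones: List[Milestone],
    endorse: Callable[[str], bool],
) -> str:
    """Run milestones one at a time, pausing for a human after each."""
    # Gate 1: the brief is assumed to be human-confirmed before this call.
    context = confirmed_brief
    for milestone in milestones:
        draft = milestone.run_ai(context)
        # Gate 2: the reviewed text, not the raw draft, feeds the next step.
        context = milestone.review(draft)
    # Gate 3: a human must explicitly take responsibility for the result.
    if not endorse(context):
        raise RuntimeError("final output was not endorsed by a human")
    return context


if __name__ == "__main__":
    demo = [
        Milestone(
            name="collect competitive data",
            run_ai=lambda ctx: ctx + " | market share: 45% (unverified)",
            review=lambda draft: draft.replace("45% (unverified)", "38%"),
        ),
        Milestone(
            name="write summary",
            run_ai=lambda ctx: ctx + " | summary drafted",
            review=lambda draft: draft,  # reviewer accepts as-is
        ),
    ]
    print(run_with_gates("brief: competitor report", demo, endorse=lambda t: True))
```

The design choice worth noting is that each milestone's reviewed output replaces the raw draft as context, so a fabricated figure is corrected before any later step can build on it.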

Redefining User Experience: Publishing Etiquette in the Generative Era

When we shift our perspective from “producers” to “consumers” or “users,” we discover another pain point: information overload and a crisis of trust.

Dr. Xiaohui from Tencent Research Institute has offered an insight into “Proof of Thought”:

Before the generative era, writing was harder than reading, and the responsibility for “proof of thought” lay with the writer; reading was seen as an exchange with great minds.

In the generative era, writing has become extremely cheap. The responsibility for “proof of thought” has shifted to the reader—readers must expend significant effort to discern whether an article is AI-generated or a genuine insight from the author. Reviewing the entire article has become the most expensive human cost in the whole process.

If our products or content impose excessive “verification costs” on users, that constitutes a poor user experience (UX).

Therefore, it is recommended that all AI-assisted content production adhere to a set of “Highest Standard Publishing Etiquette.” This is not only a moral appeal but also a means to establish a brand moat:

In order of respect from low to high, we should provide:

- Permission Statement: Clearly inform the information recipient that the content is AI-assisted and obtain user consent.

- PR Pair (Prompt + Response): Accompany the content with the prompt used to generate it. This is akin to open-source code: it gives readers the ability to “reproduce” and “verify.”

- Human Summary/Rewriting: This is the highest level of etiquette. The author personally writes the core viewpoints or summary, demonstrating that they have invested their own “thought power” on top of the AI output.

Enterprise Metrics: Viewing Human-AI Collaboration Rate Like Automation Rate

In the industrial era, we measured efficiency with “automation rate.”

In the field of knowledge production, product managers and business leaders should not only look at “output volume” but also focus on a new metric—“Human-AI Collaboration Rate.”

1. Definition and Calculation Criteria

When implementing this in an enterprise, choose one of the following three criteria and apply it consistently:

- Time Method: AI execution time / (AI time + human time). Suitable for consulting, planning, and other cognitively intensive tasks.

- Output Method: AI generated word count or tokens / (AI + human total words or tokens). Suitable for SEO articles, code generation, etc.

- Step Method: Weighted number of steps completed by AI / total weighted steps. Suitable for standardized SaaS workflows.
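The three criteria reduce to the same ratio with different inputs. Here is a minimal sketch; the function names and the sample numbers are made up for illustration and are not benchmarks.

```python
from typing import List, Tuple


def share_of_ai(ai_amount: float, human_amount: float) -> float:
    """AI share of a total; covers both the time and the output method."""
    total = ai_amount + human_amount
    if ai_amount < 0 or human_amount < 0 or total == 0:
        raise ValueError("amounts must be non-negative and not both zero")
    return ai_amount / total


def step_method_rate(steps: List[Tuple[float, bool]]) -> float:
    """Each step is (weight, completed_by_ai); returns AI weight / total weight."""
    total_weight = sum(weight for weight, _ in steps)
    if total_weight <= 0:
        raise ValueError("total step weight must be positive")
    ai_weight = sum(weight for weight, by_ai in steps if by_ai)
    return ai_weight / total_weight


if __name__ == "__main__":
    # Time method: 30 AI minutes and 90 human minutes (illustrative values).
    print(f"time:   {share_of_ai(30, 90):.0%}")
    # Output method: 3200 AI-written words and 800 human-written words.
    print(f"output: {share_of_ai(3200, 800):.0%}")
    # Step method: weights reflect how much each step matters.
    print(f"steps:  {step_method_rate([(1, True), (3, False), (2, True)]):.0%}")
```

Because the same task can score very differently under each method, the numbers are only comparable across teams or over time if one method is fixed in advance.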

2. Beware of “Inflated Rates”: Binding with Quality Metrics

A higher collaboration rate is not always better. A 100% collaboration rate usually indicates 100% garbage content. We must read the collaboration rate together with quality metrics:

- Quality Constraints: Fact error rate (per thousand words), human review time, reader feedback (error rate/satisfaction).

- Governance Principles: Dashboards must display collaboration rate, quality, and output cycle together, as three dimensions of a single view.
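One way to enforce this pairing is to make the dashboard record itself carry all three dimensions, so the rate can never be reported alone. The sketch below is hypothetical: the `DashboardRow` fields and the choice of hours as the cycle unit are assumptions, not part of any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DashboardRow:
    collaboration_rate: float        # AI share, between 0.0 and 1.0
    fact_errors_per_1k_words: float  # quality constraint
    output_cycle_hours: float        # time from brief to publication (assumed unit)


def fact_errors_per_thousand_words(fact_errors: int, word_count: int) -> float:
    """Normalize a raw error count to errors per 1,000 words."""
    if word_count <= 0:
        raise ValueError("word_count must be positive")
    if fact_errors < 0:
        raise ValueError("fact_errors must be non-negative")
    return fact_errors * 1000 / word_count


if __name__ == "__main__":
    # Illustrative values only: 3 factual errors found in a 4,000-word report.
    row = DashboardRow(
        collaboration_rate=0.8,
        fact_errors_per_1k_words=fact_errors_per_thousand_words(3, 4000),
        output_cycle_hours=6.0,
    )
    print(row)
```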

3. Reference Target Ranges

Different types of tasks have different reasonable “Human-AI Collaboration Rate” ranges:

- Data Review Type (70%-85%): Emphasizes scale and structure, with AI as the main force and humans as gatekeepers.

- Opinion Commentary Type (40%-60%): Emphasizes unique positions and judgments, with AI as only an assistant.

- Compliance/Risk Control Type (20%-40%): Emphasizes responsibility and red lines, requiring deep human involvement.
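These ranges can be encoded as a simple lookup so that a measured rate is flagged when it drifts outside its band. This is a sketch only: the range values come from the list above, while the dictionary keys and the function name `compare_to_target` are illustrative.

```python
from typing import Dict, Tuple

# Reference ranges from the list above, expressed as fractions.
TARGET_RANGES: Dict[str, Tuple[float, float]] = {
    "data_review": (0.70, 0.85),
    "opinion_commentary": (0.40, 0.60),
    "compliance_risk_control": (0.20, 0.40),
}


def compare_to_target(task_type: str, rate: float) -> str:
    """Return 'below', 'within', or 'above' relative to the task's range."""
    if task_type not in TARGET_RANGES:
        raise KeyError(f"unknown task type: {task_type}")
    low, high = TARGET_RANGES[task_type]
    if rate < low:
        return "below"
    if rate > high:
        return "above"
    return "within"


if __name__ == "__main__":
    print(compare_to_target("data_review", 0.80))
    print(compare_to_target("opinion_commentary", 0.75))
```

An "above" result is a prompt to check the quality metrics, not an automatic failure; a "below" result may simply mean the task deserves more human attention than usual.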

Conclusion

AI should not be a replacement for humans but an amplifier.

In this era of information overload, what is scarce is no longer text but credibility and unique viewpoints. For product managers designing products or workflows, the goal should not be to remove humans at any cost but to design more elegant HITL interactions.

Only when we firmly hold the “decision-making power” and “responsibility” in our hands and quantify the proportion of human-AI collaboration can the efficiency dividends of AI truly transform into high-quality productivity.
