Analyzing the Truth Behind Claude’s ‘Open Source Leak’

The recent discussions about Anthropic’s Claude ‘open source leak’ have stirred significant interest. However, the truth may be more complex than it appears. Technically, this incident primarily exposes front-end tool layer code rather than the core model; commercially, the real moat for large models lies in data and infrastructure. For developers, this leak offers a glimpse into the engineering practices of top AI companies, while the impact on ordinary users is minimal. More importantly, the incident reflects a deeper conflict between personalization and stability in AI products.

I. Assessing the ‘Truthfulness’

Most of the information in circulation consists of unconfirmed leaks or second-hand reports. Much of the so-called ‘open-source code’ may actually be:

- Early versions / fragments

- Speculative reproductions (self-written by others)

- Modified model weights / interface layers

The probability that the complete, usable, reproducible core model of Claude has been ‘fully open-sourced’ is actually quite low.

Technical Reasons for the Leak

Anthropic published the Claude Code npm package with its source maps (.map files) included. These source maps contain the original, unminified source code (TypeScript / TSX). This is an entirely plausible engineering accident, and not an uncommon one in the industry.

In simple terms:

- Front-end / Node projects produce minified code plus .map files when built.

- The .map file is intended for debugging.

- If accidentally made public → others can reverse-engineer to approximate the original source code.
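To make the mechanism concrete, here is a minimal Python sketch of why a published .map file matters. It assumes the Source Map v3 JSON layout, in which the optional `sourcesContent` array embeds the text of each original file listed in `sources`. The sample map and the `recover_sources` helper are hypothetical illustrations written for this article, not anything taken from the Claude Code package.

```python
import json


def recover_sources(source_map_text: str) -> dict[str, str]:
    """Return {original_path: original_text} for every embedded source.

    Assumes a Source Map v3 document. `sourcesContent` is optional in the
    format, so entries that are missing or null are skipped.
    """
    source_map = json.loads(source_map_text)
    paths = source_map.get("sources", [])
    contents = source_map.get("sourcesContent") or []
    recovered = {}
    for path, text in zip(paths, contents):
        if text is not None:
            recovered[path] = text
    return recovered


# Hypothetical, hand-written map for one bundled file (not real leaked data).
SAMPLE_MAP = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/main.ts", "src/vendor.ts"],
    "sourcesContent": ["export const greet = (n: string) => 'hi ' + n;\n", None],
    "mappings": "AAAA",
})

if __name__ == "__main__":
    for path, text in recover_sources(SAMPLE_MAP).items():
        print(path, "->", len(text), "characters of original source")
```

When `sourcesContent` is populated, no real reverse engineering is needed: the original files are sitting in the JSON. When it is absent, the map only links positions in the minified output back to file names and lines.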

However, claiming this equals fully open-sourcing Claude is a serious exaggeration. A particularly misleading statement is: ‘It’s basically equivalent to exposing the entire project’s complete source code.’

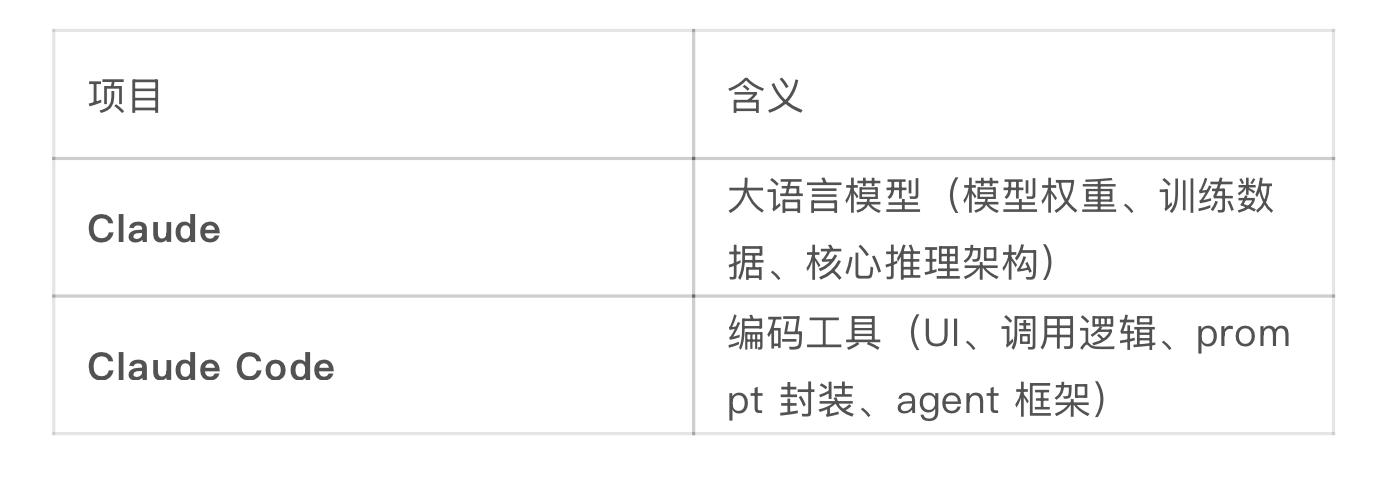

Key Misconception: Claude ≠ Claude Code

If the leak is genuine, what leaked is tool-layer code, not model weights, training data, or the core inference architecture.

II. Potential Impacts if Partially True

1) Technical Aspects

- It may help researchers better understand architectures and training methods similar to Claude.

- It could accelerate the development of the open-source community (e.g., comparable model development).

2) Commercial Aspects

- There may be some pressure on Anthropic.

- However, the real barriers for large models typically lie in: data, training scale, and infrastructure, not merely ‘code’.

3) Security Aspects

- If there is indeed a substantial capability leak, it could be used to bypass security restrictions.

- This is a highly sensitive point for AI companies.

4) Value Aspects (Useful for Developers)

- Prompt engineering (system prompt design)

- Tool use / agent invocation methods

- IDE / coding agent interaction logic

- Anthropic’s engineering best practices

III. Why Are There So Many ‘Leak Rumors’?

The AI industry currently has several characteristics:

- The models themselves are ‘black boxes’, making external validation difficult.

- There is fierce competition between open-source and closed-source models (e.g., Meta’s Llama series vs. closed models).

- The community easily confuses ‘things like Claude’ with ‘being Claude’.

This incident touches on three hot topics:

- Leak → emotional amplification

- Claude → top-tier model

- Open-source → community excitement

IV. Overall Judgment

A more accurate conclusion is:

There is noise and exaggeration, but not necessarily substantial core leakage.

Even if some materials have leaked, they are unlikely to allow others to ‘replicate a Claude’.

Large models cannot be replicated with just a repository.

So, for ordinary people or developers, how much value does this actually hold? This is a more interesting and worthy area to explore than the leak itself.

V. Real Value for Ordinary People or Developers

In fact, the most valuable aspects of Anthropic’s Claude Code usually include:

- System prompts

- Tool calling rules

- Multi-turn reasoning structures (agent loop)

- Error recovery strategies (retry / fallback)

1) For Ordinary Users

Essentially, it is about how to use Claude more intelligently.

However, to be frank: it has almost no direct use for ordinary users. Why?

- Ordinary users mostly just type prompts and chat.

- The leaked prompts mostly concern IDE plugins, automatic code modification, and project-level understanding.

- Ordinary chat usage is unlikely to benefit from these.

Moreover, these prompts do not work in isolation, but rather:

User input → agent loop → call tools → feed back to model → make decisions.
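The flow above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Anthropic's implementation: `scripted_model` is a stand-in for a real model call, `TOOLS` is a hypothetical registry, and the `max_steps` cap is one simple way to decide when to stop the loop.

```python
from typing import Callable

# Hypothetical tool registry: tool name -> plain Python function.
TOOLS: dict[str, Callable[[str], str]] = {
    "read_file": lambda path: f"<contents of {path}>",
}


def scripted_model(history: list[dict]) -> dict:
    """Stand-in for a real model call (an assumption for this sketch).

    It asks for one tool call, then answers once a tool result is in history.
    """
    if any(turn["role"] == "tool" for turn in history):
        return {"type": "final", "text": "Done: inspected main.py"}
    return {"type": "tool_call", "name": "read_file", "argument": "main.py"}


def run_agent(user_input: str, model=scripted_model, max_steps: int = 5) -> str:
    """User input -> loop -> call tool -> feed result back -> decide again."""
    history = [{"role": "user", "content": user_input}]
    for _ in range(max_steps):
        decision = model(history)
        if decision["type"] == "final":
            return decision["text"]
        # The model asked for a tool: run it and feed the result back.
        tool = TOOLS[decision["name"]]
        result = tool(decision["argument"])
        history.append({"role": "tool", "content": result})
    # Safety valve so a confused model cannot loop forever.
    return "Stopped: step limit reached"
```

The prompt is only one input to this loop. Without the tool registry, the history handling, and the stop condition, the same prompt text has nothing to drive.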

A good prompt is not a single sentence; it involves context control, a token budget, and error-handling strategies.

Having the prompt is like having the recipe for sweet and sour pork, but lacking the kitchen and ingredients.

2) For Developers (the true beneficiaries)

Developers can gain at least four layers of value from this leak:

1) Direct insight into how Anthropic writes system prompts

- How to constrain model behavior (e.g., preventing random code changes)

- How to design tool schemas

- It’s like standing on the shoulders of giants, reducing trial and error time.
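As a rough illustration of what a tool schema can look like, the sketch below pairs a name and description with a JSON-Schema-like description of the inputs, then checks a proposed call against it. The `EDIT_FILE_TOOL` definition and the `validate_tool_input` helper are hypothetical examples written for this article; they are not copied from the leaked code and only cover required fields and basic types.

```python
# Hypothetical tool definition in a JSON-Schema-like shape.
EDIT_FILE_TOOL = {
    "name": "edit_file",
    "description": "Replace one exact string in a file. Read the file first.",
    "input_schema": {
        "type": "object",
        "properties": {
            "path": {"type": "string"},
            "old_text": {"type": "string"},
            "new_text": {"type": "string"},
        },
        "required": ["path", "old_text", "new_text"],
    },
}

# Only the primitive types this sketch needs.
PYTHON_TYPES = {"string": str, "integer": int, "boolean": bool}


def validate_tool_input(tool: dict, arguments: dict) -> list[str]:
    """Return a list of problems; an empty list means the call is acceptable."""
    schema = tool["input_schema"]
    problems = []
    for field in schema["required"]:
        if field not in arguments:
            problems.append(f"missing required field: {field}")
    for field, value in arguments.items():
        spec = schema["properties"].get(field)
        if spec is None:
            problems.append(f"unexpected field: {field}")
        elif not isinstance(value, PYTHON_TYPES[spec["type"]]):
            problems.append(f"wrong type for field: {field}")
    return problems
```

The description field does as much constraining as the types do: a line such as "Read the file first" is a behavioral rule attached to the tool itself rather than buried in the main prompt.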

2) Understanding the Agent Paradigm

- Many working on AI Agents struggle with ‘how to make the model act step-by-step like a human’.

- The leaked designs will showcase: task breakdown methods, when to call tools, and when to stop the loop.

3) Learning industrial-level prompt engineering

- Long, structured prompts

- Clear rules + numerous boundary conditions

- This is entirely different from the ‘prompt tricks’ found online.

4) Borrowing engineering best practices

Tool invocation, error recovery, multi-turn reasoning.
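Error recovery is the easiest of these to show in isolation. The following is a minimal sketch of a retry-then-fallback pattern, assuming the primary operation fails by raising an exception; `call_with_recovery` and its parameters are hypothetical names for this article, and a production version would catch narrower exception types.

```python
import time
from typing import Callable


def call_with_recovery(
    primary: Callable[[], str],
    fallback: Callable[[], str],
    max_attempts: int = 3,
    base_delay: float = 0.0,
) -> str:
    """Retry `primary` with exponential backoff, then fall back.

    `base_delay` is in seconds; the wait doubles after each failed attempt.
    """
    for attempt in range(max_attempts):
        try:
            return primary()
        except Exception:  # broad on purpose; narrow this in real code
            if attempt < max_attempts - 1:
                time.sleep(base_delay * (2 ** attempt))
    # Every attempt failed: degrade gracefully instead of crashing the loop.
    return fallback()
```

A call might look like `call_with_recovery(run_tool, lambda: "tool unavailable", base_delay=0.5)`, where `run_tool` is whatever operation is allowed to fail transiently.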

VI. Conclusion

The most powerful core, the truest moat, lies in the capabilities of the Claude model itself.

Using the same set of prompts:

- Using Claude → very satisfactory results.

- Using other ordinary open-source models → may not work at all.

Outsiders watch the excitement, insiders learn the methodology.

Experience leakage is not capability leakage.

VII. Side Note: The ‘Personalization Pain’ Behind the 400 Error

While I was writing this article, Anthropic released Claude Code v2.1.92, introducing a cool new feature: Ultraplan.

However, more interestingly, some developers attempted to modify the system prompt for a more personalized experience.

As a result, Anthropic’s backend directly returned a 400 error.

Some believe this was a patch in response to the earlier ‘Claude Code source leak incident’.

This raises the question:

Users pay a hefty subscription fee, so why can they not freely define the AI’s behavior? The restriction has drawn significant skepticism in the developer community.

Why is the 400 Error Actually Necessary?

In Anthropic’s design, the system prompt is not an ordinary prompt, but rather:

The scheduling hub + behavior constraints + workflow description.

It typically serves to:

- Define roles (you are a coding agent)

- Specify behaviors (when to modify code, when not to)

- Tool invocation rules (when to use which tools)

- Safety constraints (what cannot be done)

When we ‘add a bit of personalization’, we may inadvertently cause these disruptions:

1) Interrupting decision logic

The agent’s original flow is: analyze → decide → call tool → return result.

A change may lead to: analyze → explain → re-explain → forget to call the tool.

2) Blurring priorities

The system prompt often contains hidden priorities (tasks must be completed first).

If you add a line like ‘prioritize making the user feel relaxed’, the model may become confused: should it complete the task or chat?

3) Disrupting format constraints

Many agents rely on strict formats (JSON outputs, tool invocation structures).

Changing the tone might directly lead to natural language outputs → causing program parsing failures.
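A small Python sketch shows how brittle this is. It assumes, purely for illustration, that the surrounding program expects each reply to be a JSON object with a `tool` key; `parse_tool_call` and both sample replies are hypothetical and do not describe Claude Code's actual wire format.

```python
import json


def parse_tool_call(model_output: str) -> dict:
    """Parse a reply that is expected to be a JSON tool call.

    Raises ValueError when the reply is not a JSON object with a "tool" key.
    """
    try:
        payload = json.loads(model_output)
    except json.JSONDecodeError as error:
        raise ValueError(f"reply is not valid JSON: {error.msg}") from error
    if not isinstance(payload, dict) or "tool" not in payload:
        raise ValueError("reply is JSON but not a tool call")
    return payload


# What the program expects from the model.
STRICT_REPLY = '{"tool": "read_file", "arguments": {"path": "main.py"}}'
# What a "friendlier" system prompt might produce instead.
CHATTY_REPLY = "Sure thing! Let me take a look at main.py for you :)"

if __name__ == "__main__":
    print(parse_tool_call(STRICT_REPLY)["tool"])
    try:
        parse_tool_call(CHATTY_REPLY)
    except ValueError as error:
        print("agent step failed:", error)
```

The chatty reply is perfectly pleasant for a human reader, yet the parser rejects it and the agent step fails.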

Why Are Paying Users More Frustrated?

The expectation is: ‘I paid, so I should be able to customize it.’

But the reality is: you are modifying the system core, not just the skin.

This is like wanting to change the theme or skin of a phone, but ending up modifying the iOS kernel, causing the system to crash.

This Reflects a Deeper Issue

All systems like Claude face a contradiction:

Flexibility vs. Stability

The more open the prompt → the more flexible → the easier it is to lose control.

In practice, Anthropic leans toward the ‘stable’ side.

Correct Personalization Methods (instead of hard modifications to the system prompt)

If you really want to customize the behavior, do not touch the system prompt. Instead:

Method 1: Place it in the user prompt

‘Please explain the following code in a more relaxed tone: xxx’

This way, it won’t disrupt the system logic.

Method 2: Use ‘soft constraints’ instead of ‘hard rewrites’

Instead of a hard rewrite: ‘You must chat like a friend.’

Prefer a soft constraint: ‘You can be a bit more natural, as long as it doesn’t affect task execution.’

Method 3: Layered Control

Break the prompt into:

- System (untouched)

- Developer (slight control)

- User (personalization)
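The layering can be sketched as follows. This is a minimal illustration, assuming the application assembles the prompt itself; the layer names mirror the list above and are not tied to any particular API's role names, and `SYSTEM_PROMPT` and `build_layers` are hypothetical.

```python
# Hypothetical system layer: passed through untouched by design.
SYSTEM_PROMPT = "You are a coding agent. Complete the task before anything else."


def build_layers(developer_rules: list[str], user_style: str, request: str) -> list[dict]:
    """Assemble three prompt layers without editing the system layer."""
    layers = [{"layer": "system", "content": SYSTEM_PROMPT}]
    if developer_rules:
        # Slight control: a few product-level rules, kept separate.
        layers.append({"layer": "developer", "content": "\n".join(developer_rules)})
    user_content = request
    if user_style:
        # Personalization rides along with the request as a soft preference.
        user_content = f"{request}\n\nStyle preference (optional): {user_style}"
    layers.append({"layer": "user", "content": user_content})
    return layers
```

For example, `build_layers(["Keep answers under 200 words."], "relaxed tone", "Explain this function.")` yields three layers in which the tone preference appears only in the user layer, so the task-first rule in the system layer is never overwritten.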

My Thoughts on This Matter

At its core, AI products have yet to mature in terms of ‘controllable personalization’.

In summary, the core conclusion of this article is:

The source leak of Claude Code is an engineering accident, not a model leak; it holds learning value for developers, but has limited impact on ordinary users.

The real concern is not about ‘replicating Claude’, but rather that the industry’s understanding of AI controllability remains immature.
