Introduction

The dominance of the Transformer architecture is under threat! A new AI model, SubQ, has emerged with a Subquadratic Sparse Attention (SSA) architecture. Its developers claim a long-context cost of less than 5% of Claude Opus's and a reduction in attention compute of up to a factor of 1000.

The Emergence of SubQ

SubQ is billed as the world’s first model based on a fully subquadratic sparse attention architecture, capable of handling a context of up to 12 million tokens.

The core advantage of SubQ lies in its SSA architecture, which dynamically selects where to attend based on content instead of blindly computing the relationship between every pair of tokens. Compared with dense-attention Transformers, its attention compute is reportedly reduced by a factor of nearly 1000 at the longest context lengths.

According to the company's reported results, at a context of 1 million tokens SubQ is 52 times faster than dense attention with FlashAttention and costs less than 5% of Claude Opus.

The company behind this architecture, Subquadratic, is based in Miami and consists of only 13 employees. AI expert Bindu Reddy remarked, “If this is true, the valuations of Anthropic and OpenAI would drop to zero!”

Others have stated that this is the true way forward for scaling large language models (LLMs).

The Original Sin of Transformers

In 2017, Google’s paper “Attention is All You Need” established the dominance of the Transformer architecture. Over the past nine years, all cutting-edge large models, from GPT to Claude to Gemini, have been built on the same foundation: dense attention mechanisms.

Transformers have always taken a brute-force approach: every token is compared with every other token in the sequence. This mechanism has trapped them in a “quadratic complexity” quagmire, where doubling the context length quadruples the computational cost.
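The quadratic growth described above can be checked with a few lines of arithmetic. This is only a counting sketch: the function name `dense_attention_pairs` is made up for illustration, and it counts query-key comparisons and ignores constant factors such as head count or causal masking.

```python
def dense_attention_pairs(num_tokens: int) -> int:
    """Query-key comparisons when every token attends to every token."""
    return num_tokens * num_tokens


# Each doubling of the context multiplies the pair count by four.
for n in (128_000, 256_000, 512_000, 1_024_000):
    print(f"{n:>9,} tokens -> {dense_attention_pairs(n):.3e} token pairs")

growth = dense_attention_pairs(256_000) / dense_attention_pairs(128_000)
print(f"growth when the context doubles: {growth:.0f}x")
```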

This explains why almost all LLMs are limited to around 1 million tokens; it’s not that the technology can’t handle longer contexts, but that the costs become prohibitive.

SubQ’s emergence fundamentally changes this equation.

The Birth of SSA Architecture

Less is More

The core breakthrough of SubQ is called SSA—Subquadratic Sparse Attention. Its approach is surprisingly simple: it no longer requires every token to compare with all other tokens.

Since most attention weights in a trained model are close to zero, why compute them? For each query, SSA selects the truly relevant positions based on content and computes attention only at those positions, skipping more than 99% of the pairwise calculations.
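The article does not describe how SSA picks its positions, so the following is only a minimal sketch of the general idea using plain top-k selection. That choice is an assumption, not Subquadratic's method, and the names `topk_sparse_attention` and `k` are hypothetical. This toy version still scores every key in order to pick the top k, so it illustrates dropping near-zero weights, not a subquadratic selector.

```python
import math


def scaled_scores(query, keys):
    """Scaled dot-product score of one query against every key."""
    scale = math.sqrt(len(query))
    return [sum(q * k for q, k in zip(query, key)) / scale for key in keys]


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def weighted_sum(weights, vectors):
    """Attention output: weighted average of the value vectors."""
    dim = len(vectors[0])
    return [sum(w * v[d] for w, v in zip(weights, vectors)) for d in range(dim)]


def dense_attention(query, keys, values):
    """Reference: attend to every position."""
    return weighted_sum(softmax(scaled_scores(query, keys)), values)


def topk_sparse_attention(query, keys, values, k):
    """Attend only to the k highest-scoring positions (assumed selection rule)."""
    scores = scaled_scores(query, keys)
    selected = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:k]
    weights = softmax([scores[i] for i in selected])
    return weighted_sum(weights, [values[i] for i in selected])


# One key matches the query strongly; the others receive near-zero weight.
query = [1.0, 0.0]
keys = [[10.0, 0.0], [0.0, 1.0], [-10.0, 0.0], [0.0, -1.0]]
values = [[1.0, 0.0], [0.0, 1.0], [5.0, 5.0], [2.0, 2.0]]

dense_out = dense_attention(query, keys, values)
sparse_out = topk_sparse_attention(query, keys, values, k=1)
print("dense :", dense_out)
print("sparse:", sparse_out)
```

In this example the single selected position already reproduces the dense result to within about 0.01 per component, which is the intuition behind skipping the rest.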

Key Features of SSA

- Linear Scaling: The computational load grows with the number of selected positions, not the entire sequence length. Doubling the context only doubles the cost, rather than quadrupling it.

- Content-Dependent Routing: The model decides where to look based on semantics, not position. Key information can be found whether it is the 3rd token or the 1,100,000th token in the sequence.

- Precise Retrieval: Unlike recurrent models that compress information into a fixed state, SSA retains the ability to retrieve information accurately from any position.
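The linear-scaling claim above can be made concrete with a toy cost model. It assumes each query attends to a fixed budget of selected positions; the value `BUDGET = 4_096` is purely hypothetical, and the model ignores whatever it costs to choose those positions.

```python
def dense_cost(num_tokens: int) -> int:
    """Pairwise comparisons for dense attention: n * n."""
    return num_tokens * num_tokens


def sparse_cost(num_tokens: int, budget: int) -> int:
    """Comparisons when each query attends to at most `budget` positions."""
    return num_tokens * min(budget, num_tokens)


BUDGET = 4_096  # hypothetical per-query budget, not a published SubQ figure

for n in (1_000_000, 2_000_000, 4_000_000):
    d, s = dense_cost(n), sparse_cost(n, BUDGET)
    print(f"n={n:>9,}  dense={d:.2e}  sparse={s:.2e}  dense/sparse={d / s:,.0f}x")
```

Under this assumption, doubling the context doubles the sparse cost and quadruples the dense cost, so the gap between the two widens in proportion to the context length.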

In essence, SSA does not make dense attention calculations faster; it reduces the amount of attention computation the model needs to perform.

The reduction in computational load directly translates to speed.

Performance Metrics

SubQ’s released data is striking:

At a length of 1 million tokens, SSA is 52.2 times faster than standard dense attention implemented with FlashAttention-2.

At 128,000 tokens, it is 7.2 times faster; at 256,000 tokens, 13.2 times; and at 512,000 tokens, 23 times faster. Clearly, the longer the context, the more pronounced the advantage.
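As a rough sanity check on these figures: if dense attention time grew exactly quadratically and SSA time exactly linearly, the speedup would grow in proportion to the context length. The short script below compares how much the reported speedup grows at each step with how much the context grows. The dictionary holds only the numbers quoted above; the variable names are illustrative.

```python
# Reported SSA speedups over dense attention, keyed by context length in tokens.
reported_speedup = {128_000: 7.2, 256_000: 13.2, 512_000: 23.0, 1_000_000: 52.2}

lengths = sorted(reported_speedup)
steps = []
for shorter, longer in zip(lengths, lengths[1:]):
    length_ratio = longer / shorter
    speedup_ratio = reported_speedup[longer] / reported_speedup[shorter]
    steps.append((length_ratio, speedup_ratio))
    print(f"{shorter:>9,} -> {longer:>9,}: context x{length_ratio:.2f}, "
          f"speedup x{speedup_ratio:.2f}")
```

The per-step speedup growth comes out at roughly 1.8x, 1.7x and 2.3x for context growth of about 2x each time, which is broadly in line with a quadratic-versus-linear gap without matching it exactly.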

This is a direct reflection of SSA’s linear scaling—dense attention becomes slower with longer contexts, while SSA becomes more cost-effective.

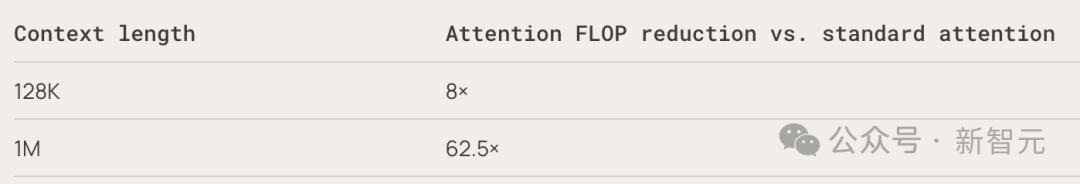

In terms of compute, attention FLOPs at 1 million tokens are reduced by a factor of 62.5. At 12 million tokens, the reduction rises to nearly 1000 times.

Regarding costs, Subquadratic provided a clear comparison: on the RULER 128K benchmark, SubQ costs $8, while Opus costs $2600, a difference of more than 300 times (about 325).
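One back-of-the-envelope way to read these reduction factors is a toy model in which dense attention costs n × n comparisons and sparse attention costs n × k, so the reduction is simply n / k. The helper `implied_budget` below is hypothetical, and the resulting k values are an inference under that assumption, not numbers published by Subquadratic. Real FLOP accounting would also include the selection step.

```python
def implied_budget(context_tokens: int, flop_reduction: float) -> float:
    """Solve (n * n) / (n * k) = reduction for k, giving k = n / reduction."""
    return context_tokens / flop_reduction


# Reduction factors as quoted in the article; "nearly 1000" is rounded to 1000.
print(implied_budget(1_000_000, 62.5))     # 16000.0
print(implied_budget(12_000_000, 1000.0))  # 12000.0
```

Both figures land in the low tens of thousands of attended positions per query, which is consistent with a roughly fixed budget, though the toy model is too crude to say more than that.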

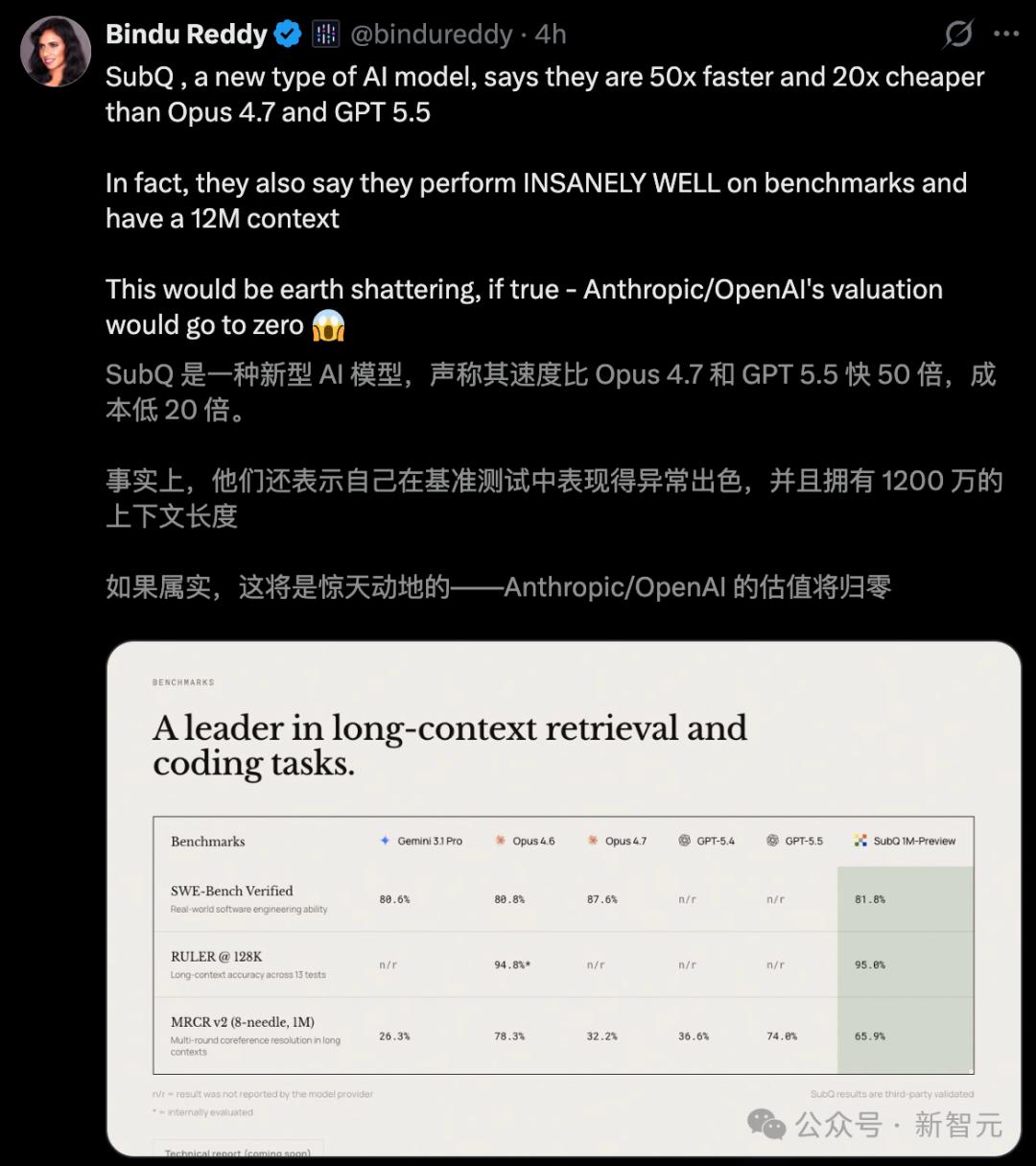

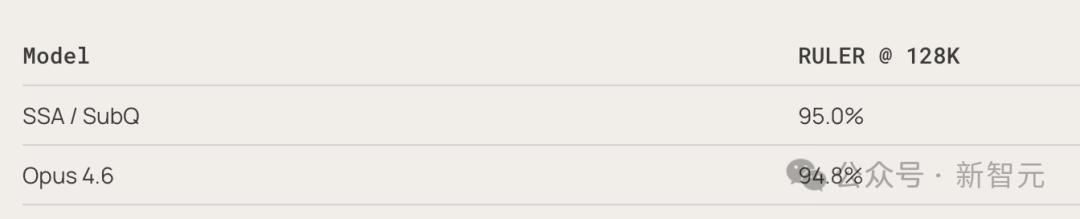

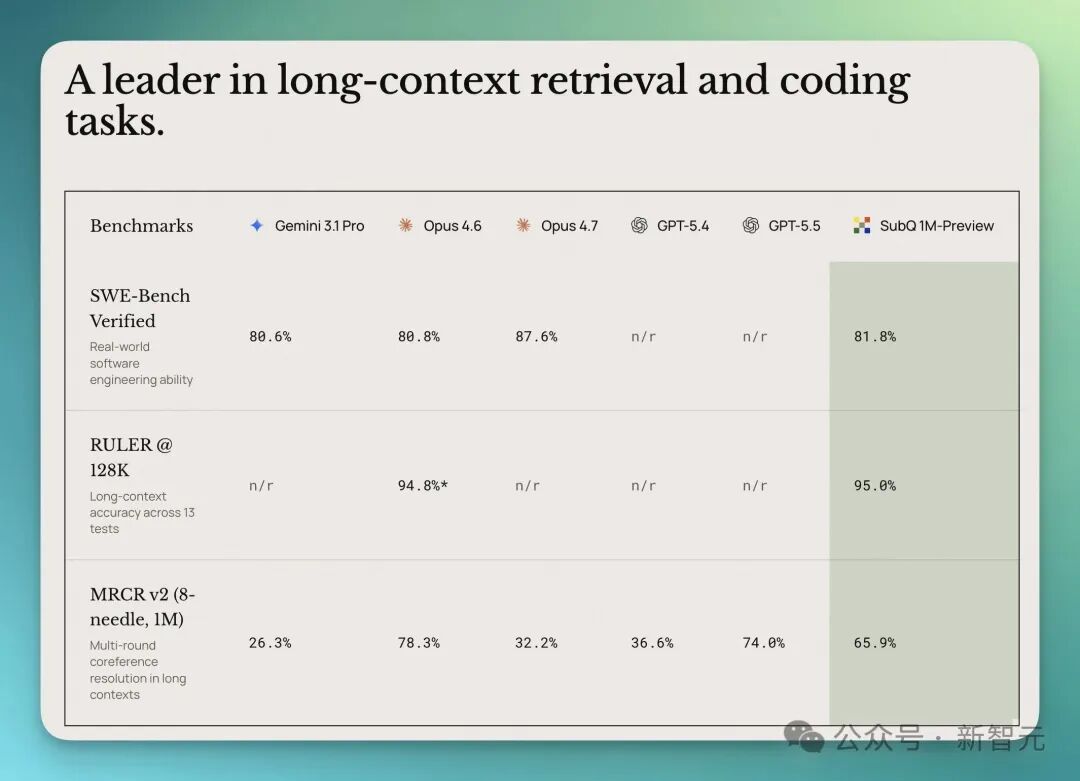

Crucially, these speed and cost advantages do not come at the expense of accuracy. In the RULER 128K benchmark test, SubQ scored 95%, while Opus 4.6 scored 94.8%.

In SWE-Bench Verified (code engineering), SubQ scored 81.8, surpassing Opus 4.6’s score of 80.8. In MRCR v2 (long context retrieval), SubQ achieved 65.9%, behind Opus 4.6’s 78% but far ahead of GPT 5.4’s 39% and Gemini 3.1 Pro’s 23%.

When viewed together, these numbers are striking: this seed-stage company, at less than 5% of Opus’s cost, matches or even surpasses the flagship models of Anthropic and OpenAI on several core benchmarks.

With a single prompt, SubQ can handle ultra-long inputs of 12 million tokens: whether it’s an entire codebase, months of PR records, or the state of a long-running AI agent, it can manage it all at only one-fifth of the original cost.

If all of this proves true, it would mark the most significant architectural breakthrough since the advent of Transformers.

A Startup with 13 Employees Aiming to Disrupt Transformers

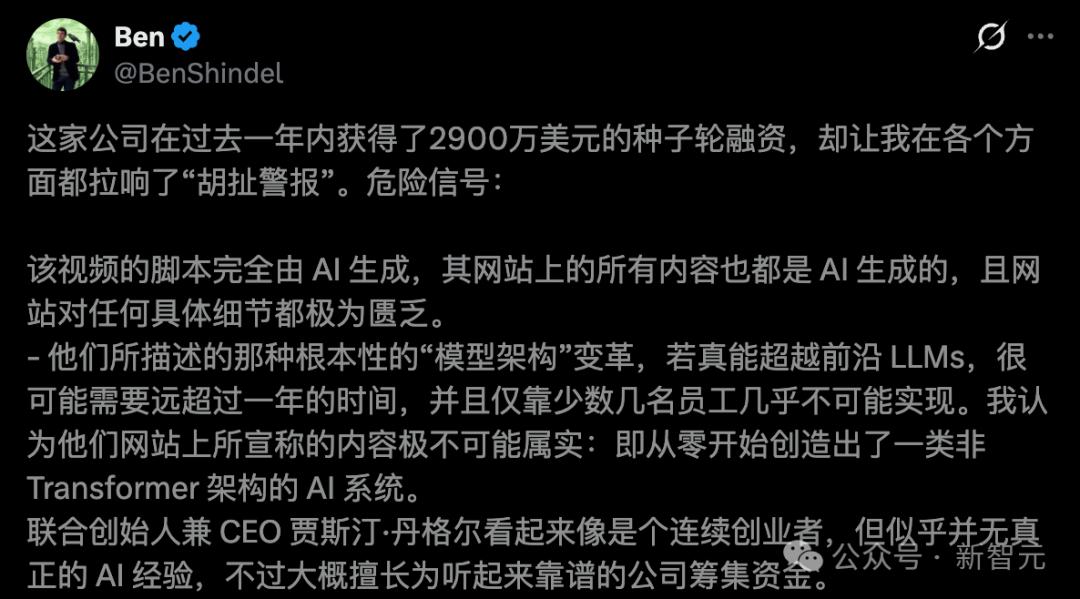

Subquadratic was founded in 2024 and has raised $29 million in seed funding at a valuation of $500 million. It has two co-founders: CEO Justin Dangel and CTO Alexander Whedon.

The research team consists of 11 PhDs from Meta, Google, Oxford University, Cambridge University, and Adobe. Notably, the company was previously called Aldea and focused on voice models before pivoting to attention architecture research.

Three product lines were launched simultaneously:

- SubQ API: Full context interface for 12 million tokens

- SubQ Code: Command-line coding agent that can process an entire codebase at once

- SubQ Search: Deep research tool, initially free

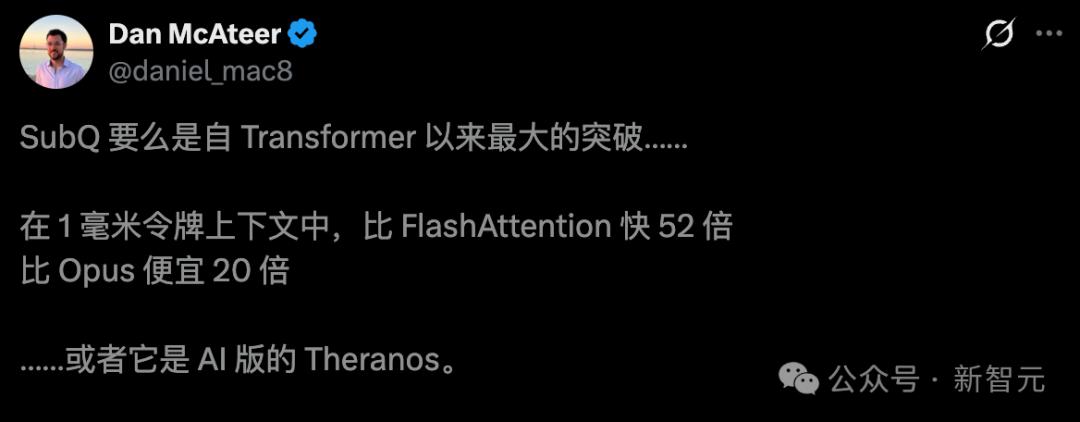

Community Reactions: Terminator or AI Version of Theranos?

Within hours of SubQ’s release, the AI community split into two camps. AI expert Dan McAteer summed up the sentiment: SubQ is either the biggest breakthrough since Transformers… or the AI equivalent of Theranos.

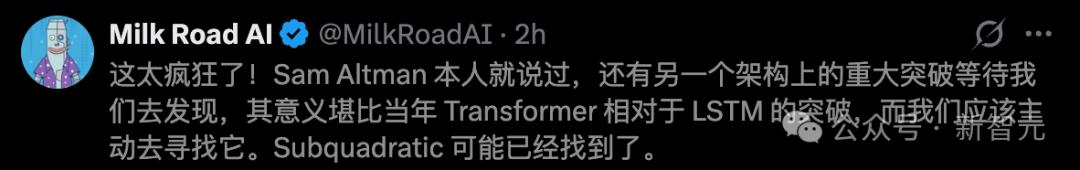

Supporters are numerous, with some calling this one of the craziest AI releases of 2026 and suggesting that Subquadratic may have found the kind of major architectural breakthrough that Sam Altman has talked about.

However, skeptics are equally vocal, with some calling it a “scam company,” especially after reviewing the founders’ LinkedIn profiles.

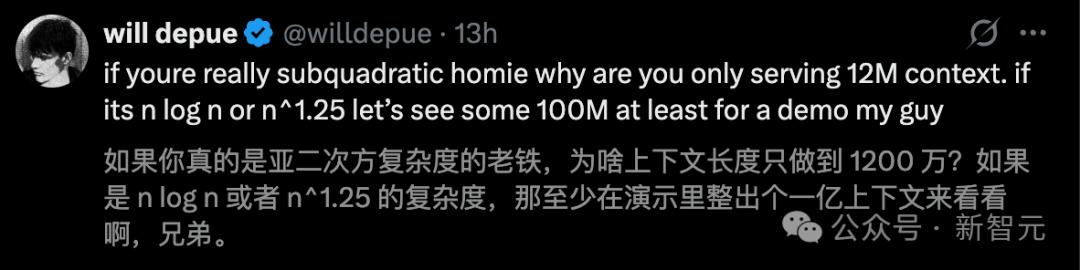

Former OpenAI researcher Will Depue argued that SubQ is almost certainly a sparse-attention fine-tune of a Kimi or DeepSeek model.

The AI community has seen many projects that peaked on launch day; the gap between a launch presentation and real-world deployment can be vast. Still, the stakes are high, and the industry cannot afford to dismiss the claims out of hand.

The true answers may only emerge once the technical report is made public and the benchmarks are independently replicated.
